Vibe Coding Made Me Ship More Projects Than I Could Track Manually

AI coding tools made it easy to launch more side projects than human dashboard workflows can keep up with. Agent Analytics gives your existing AI agent one measurement layer across all of them.

Vibe coding changed how I build.

I can go from idea to live project much faster now. The bottleneck is no longer shipping. The bottleneck is knowing what is happening after I ship.

If I launch one project, a normal dashboard is fine. If AI lets me launch five, eight, or twelve small projects (landing pages, experiments, side bets), I am not going to open five, eight, or twelve dashboards every morning.

That is the real shift.

Vibe coding made me ship more projects than I could track manually.

Dashboards Are Good. Human Dashboard Workflows Are the Problem.

Dashboards exist for a reason. They help humans inspect traffic, compare periods, and understand behavior. Tools like Plausible, PostHog, Mixpanel, Umami, and Google Analytics all make sense if a human is the one doing the checking.

The problem is that vibe coding changes the ratio.

One person can now launch far more projects than one person can comfortably monitor by hand. So the thing that stops scaling is not data collection. It is human attention.

I still want to know:

- which project is quietly starting to grow

- which landing page is getting traffic but not converting

- which source is sending real users instead of junk clicks

- where people drop off in the funnel

- whether an experiment actually improved anything

But I do not want that knowledge badly enough to log into a pile of separate dashboards every day.

That is where the old workflow breaks.

Vibe Coding Made Human Analytics Workflows Stop Scaling

When I shipped more slowly, manual analytics made sense. I had fewer live projects, fewer domains, fewer experiments, and fewer places to remember to check.

Now the surface area is much bigger.

AI coding tools such as Claude Code, Cursor, Codex, and OpenClaw make it easy to spin up:

- a new SaaS idea

- a waitlist page

- a docs site

- a pricing test

- a side tool

- a niche landing page for a specific audience

That is good. More shots on goal are good.

But every extra project creates another place where useful signals can hide. A small but growing project needs more watching, not less, because early signals are weak and easy to miss.

That is why I keep coming back to this line:

Vibe coding made human analytics workflows stop scaling.

If you only have one main product, human-first analytics tools are often enough. If you have a portfolio of projects, the experience starts to break. The data is not missing. It is trapped behind workflows you will not repeat often enough to catch what matters.

What I Actually Wanted Was One Agent Watching Everything

I did not want a new AI service. I already had the AI part.

I already use AI coding tools to ship. What I was missing was the measurement layer that my existing AI agent could inspect, compare, and act on.

I did not need another dashboard trying to get me to log in more often.

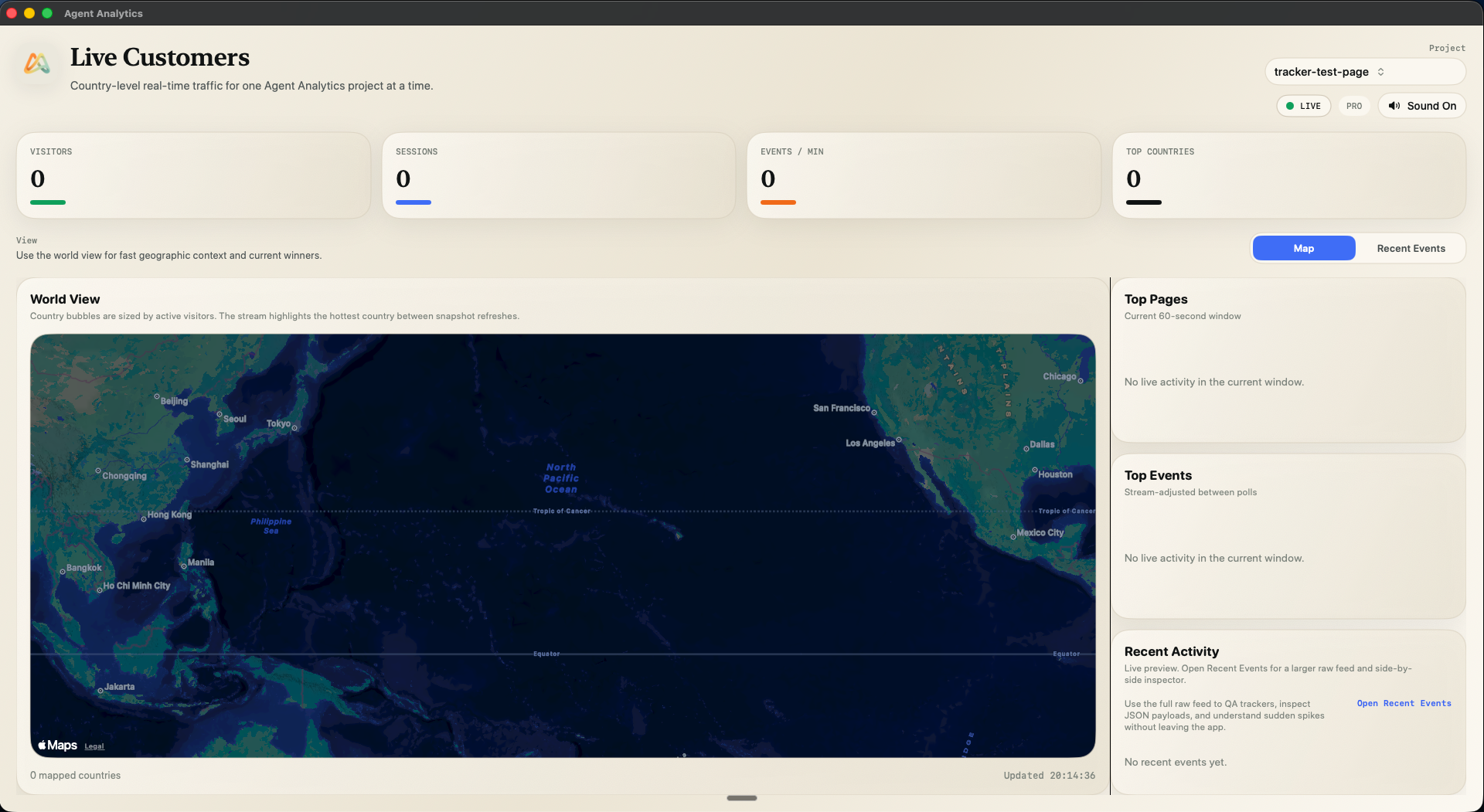

I needed analytics that worked with the agent I already use:

- one place to track projects

- one API the agent can query

- one layer for page views, events, funnels, retention, and experiments

- one cross-project view of what changed

- one system the agent can inspect without browser logins or dashboard babysitting

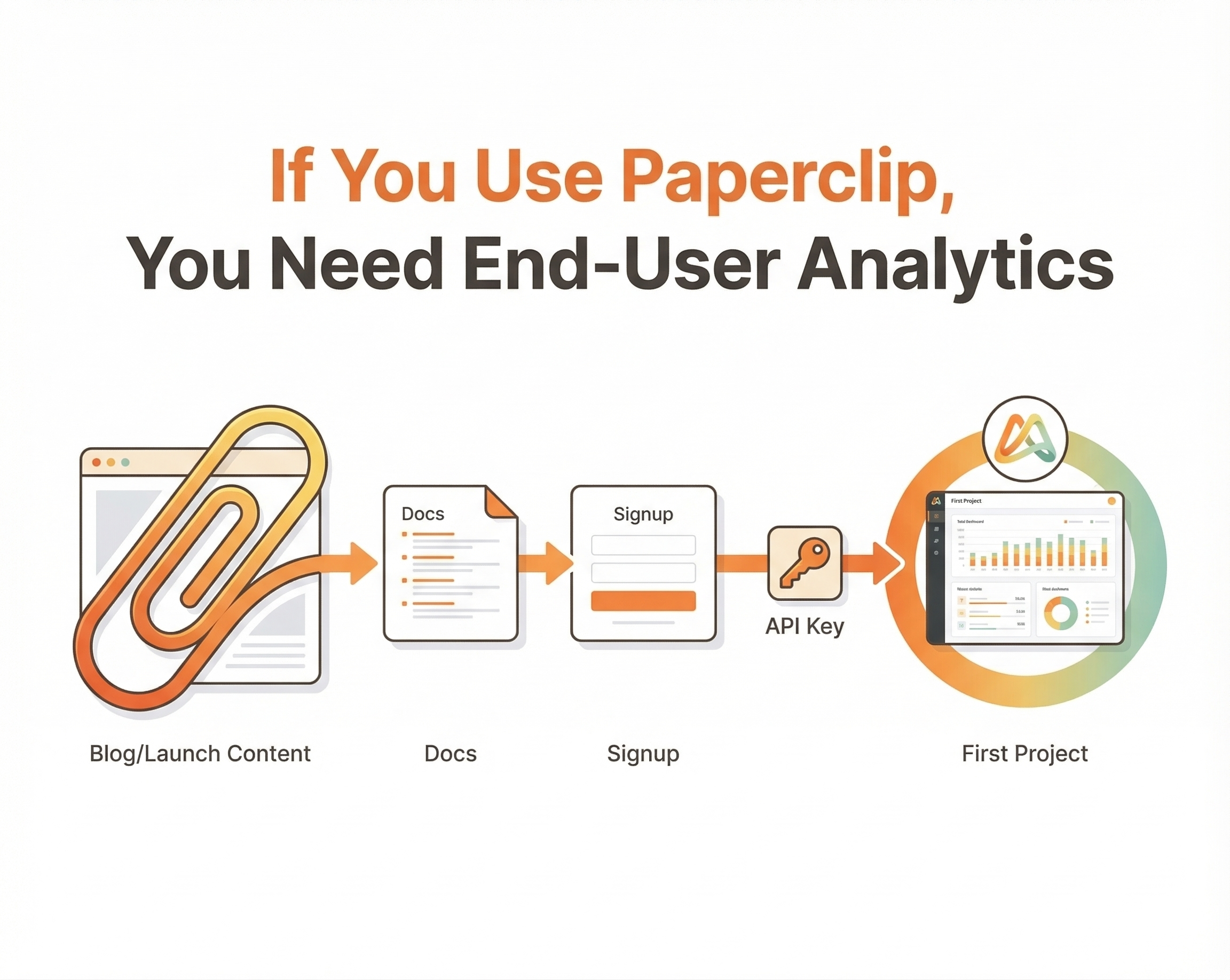

This is exactly why we built Agent Analytics.

Agent Analytics is not an AI agent. It is the analytics layer for the AI agent you already have.

If you are already using Claude Code, Cursor, Codex, OpenClaw, or a similar tool, the point is not to replace it. The point is to give that existing agent access to the measurement it needs.

Why This Works Better Than Manual Checking

Once analytics becomes agent-readable, the workflow changes.

Instead of opening dashboards and deciding where to click, I can have my agent:

- compare all my projects and tell me what changed this week

- flag the project that is starting to get traction

- show which channel drove useful traffic

- inspect a funnel and tell me where the biggest drop-off is

- monitor an experiment and report whether a variant is winning

- tell me which project deserves attention today

That is a much better fit for how I actually work now.

The agent handles the repetitive inspection. I handle the decisions.
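
To make that concrete, here is a minimal sketch of the kind of cross-project comparison an agent could run once it has the numbers. Everything in it is an assumption for illustration: the `WeeklyStats` shape, the `summarize` helper, the `min_visitors` threshold, and the sample data are all hypothetical, and none of it is the Agent Analytics API.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    """Hypothetical per-project numbers an agent might have fetched."""
    project: str
    visitors_last_week: int
    visitors_this_week: int
    signups_this_week: int


def weekly_change(stats):
    """Relative change in visitors, or None when there is no baseline."""
    if stats.visitors_last_week == 0:
        return None
    delta = stats.visitors_this_week - stats.visitors_last_week
    return delta / stats.visitors_last_week


def summarize(all_stats, min_visitors=50):
    """Rank projects by week-over-week growth, skipping tiny samples."""
    rows = []
    for s in all_stats:
        change = weekly_change(s)
        # Very small projects produce noisy percentages, so leave them out.
        if change is None or s.visitors_this_week < min_visitors:
            continue
        rows.append((s.project, change, s.signups_this_week))
    return sorted(rows, key=lambda row: row[1], reverse=True)


# Made-up numbers, purely for illustration.
portfolio = [
    WeeklyStats("waitlist-page", 120, 180, 9),
    WeeklyStats("docs-site", 400, 380, 0),
    WeeklyStats("pricing-test", 10, 30, 1),
]

for project, change, signups in summarize(portfolio):
    print(f"{project}: {change:+.0%} visitors, {signups} signups")
```

The point of the sketch is the shape of the output: one ranked summary across projects instead of one dashboard visit per project. The threshold is a judgment call, and a real setup would likely tune it per project.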

That is what I mean when I say analytics should be built for machines to query, not just for humans to view in dashboards.

The Cure Is Not Fewer Projects. It Is Better Instrumentation for the Agent You Already Use.

The wrong response to vibe coding is to tell builders to slow down. The whole point is that we can ship more.

The right response is to make measurement scale with that new reality.

For me, that means:

- keep shipping quickly

- instrument projects consistently

- let my AI agent monitor all of them

- get one readable summary instead of a manual dashboard tour

- use funnels, retention, and experiments to decide what to improve next

That closes the growth loop in a way human-only analytics workflows never really could.
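
As one example of the funnel step, here is a rough sketch of how an agent could find the biggest drop-off once it has step counts. The step names, the counts, and the `biggest_drop_off` helper are hypothetical and only meant to show the arithmetic.

```python
def biggest_drop_off(funnel):
    """Find the step transition that loses the largest share of users.

    `funnel` is an ordered list of (step_name, user_count) pairs.
    Returns (from_step, to_step, drop_rate), or None if nothing can be compared.
    """
    worst = None
    # Walk through adjacent pairs of steps.
    for (name_a, count_a), (name_b, count_b) in zip(funnel, funnel[1:]):
        if count_a == 0:
            continue  # no users reached this step, so there is no rate to compute
        drop = 1 - count_b / count_a
        if worst is None or drop > worst[2]:
            worst = (name_a, name_b, drop)
    return worst


# Made-up counts, purely for illustration.
funnel = [
    ("landing_view", 1000),
    ("signup_start", 300),
    ("signup_done", 240),
    ("first_action", 60),
]

result = biggest_drop_off(funnel)
if result is not None:
    from_step, to_step, drop = result
    print(f"Biggest drop-off: {from_step} -> {to_step} ({drop:.0%} lost)")
```

Note that this measures relative loss between adjacent steps, so a late step with few users can still rank as the worst one. Whether that or the absolute number of users lost is the better signal depends on the project.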

Where Normal Analytics Tools Still Fit

Traditional analytics tools are still useful if you want a human-first dashboard:

- Plausible for simple, privacy-friendly traffic analytics

- Umami for a lightweight open source option

- PostHog for deeper product analytics

- Mixpanel for mature teams with heavier analytics needs

- Google Analytics if you are already in that ecosystem

But those tools were built around a human opening a product and inspecting data visually.

My problem was different.

I wanted analytics that an agent could use as part of a workflow: compare across projects, watch over time, support experiments, and help answer what to do next.

That is the gap Agent Analytics is built for.
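
For the experiment part, a minimal sketch of one check an agent could run is a two-proportion z-test on conversion counts. This is a standard statistical test that I am swapping in for illustration, not a description of how Agent Analytics evaluates experiments, and the `compare_variants` helper and the numbers are hypothetical.

```python
import math


def compare_variants(control_visitors, control_conversions,
                     variant_visitors, variant_conversions):
    """Two-proportion z-test on conversion rates.

    Returns (z, two_sided_p_value), or None when the test is undefined.
    """
    if control_visitors == 0 or variant_visitors == 0:
        return None
    p_control = control_conversions / control_visitors
    p_variant = variant_conversions / variant_visitors
    # Pooled conversion rate under the assumption that both variants are equal.
    pooled = (control_conversions + variant_conversions) / (
        control_visitors + variant_visitors
    )
    se = math.sqrt(
        pooled * (1 - pooled) * (1 / control_visitors + 1 / variant_visitors)
    )
    if se == 0:
        return None  # all or no visitors converted, so there is no variance
    z = (p_variant - p_control) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts, purely for illustration.
result = compare_variants(1000, 50, 1000, 80)
if result is not None:
    z, p_value = result
    print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A positive `z` means the variant converted better than the control. The test assumes reasonably large samples and a single look at the data, so an agent checking every day would need a stricter rule than a fixed p-value cutoff.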

If Vibe Coding Helped You Ship More, Your Analytics Has to Evolve Too

Vibe coding is making builders more productive. That naturally means more projects, more launches, more tests, and more chances to find something that works.

It also means the old model of manually checking dashboards stops scaling much sooner.

So if you are feeling the pain, I do not think the answer is to become more disciplined about logging into more tools.

I think the answer is to give your existing AI agent a measurement layer.

That is what I wanted for myself, and that is what Agent Analytics is built for.

Because shipping got easier.

Now measurement has to catch up.
