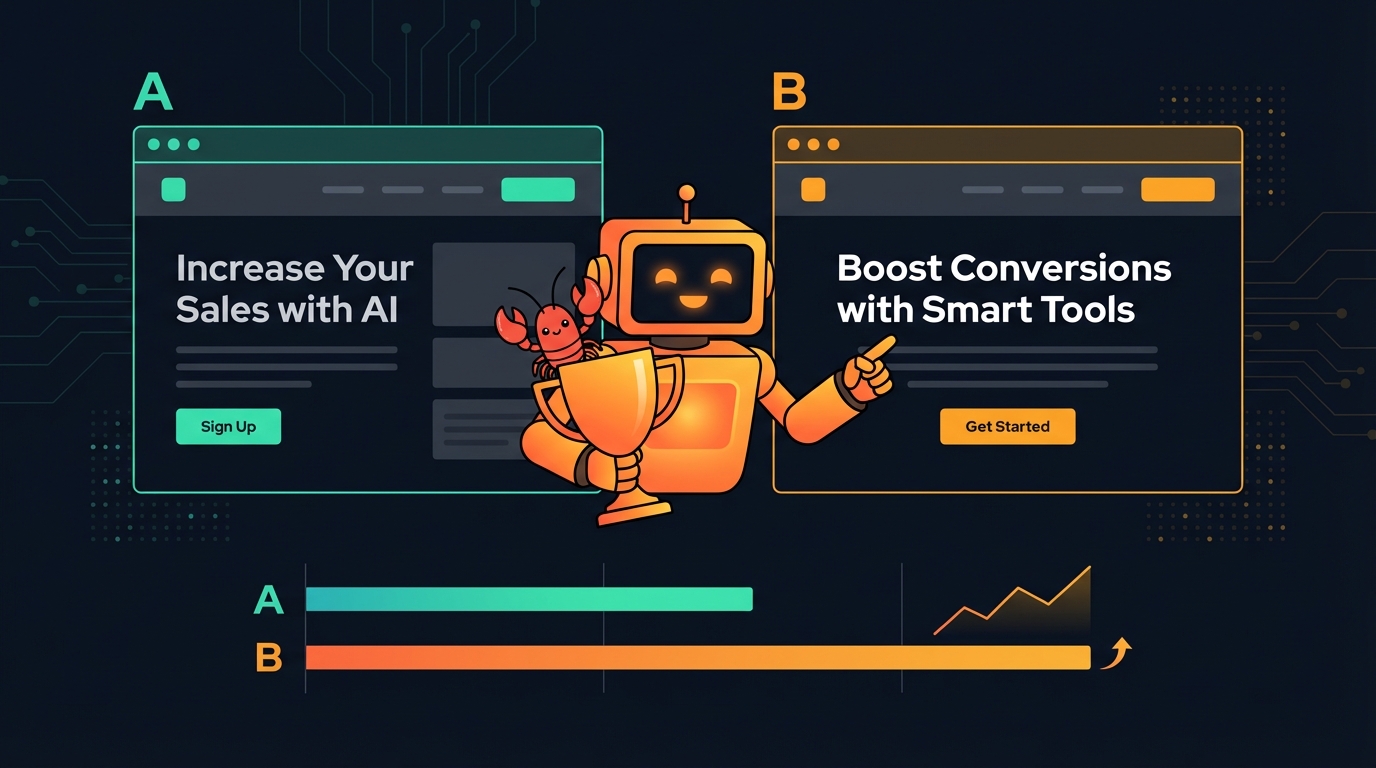

🦞 A/B Testing Your AI Agent Can Actually Use

Run browser-side experiments with declarative HTML variants, no heavy SDK, and an API your agent can drive end-to-end. Create tests, QA variants, read results, and ship winners.

You want to test whether “Start Free Trial” converts better than “Sign Up.” With most A/B tools, that means a dashboard, an SDK, a snippet, and another place to remember to check later.

What if your AI agent could handle the whole loop?

A/B Testing, Agent-First

Agent Analytics has built-in browser-side experiments that your agent can create, wire up, QA, and review through the same API/CLI workflow it already uses for analytics.

That matters because the hard part usually isn’t launching a test. It’s remembering to:

- define a real goal

- wire the variant correctly

- QA both branches

- come back and decide what to ship

Agent Analytics is designed so your agent can do that work with you.

What makes it different

- Declarative variants: for most tests, add `data-aa-experiment` and `data-aa-variant-*` attributes directly in HTML.

- Goal-first workflow: create the experiment around a real business event like `signup` or `checkout`, not just page views.

- QA-friendly forcing: load any variant directly with a URL param like `?aa_variant_signup_cta=new_cta`.

- Agent-driven lifecycle: create via CLI, MCP, or API; read results later and decide whether to keep running, pause, or complete the test.

- Programmatic fallback: when text swaps are too limiting, use `window.aa?.experiment()` for more dynamic UI changes.

Experiments are available on paid plans. If you still need the base tracker install, start with the Getting Started guide.

The recommended workflow

The current docs recommend a simple pattern:

- Pick one UI element to test

- Pick one goal event that reflects a real outcome

- Create the experiment

- Wire the variant declaratively where possible

- QA both variants with forced URLs

- Read results and decide whether to keep running, pause, or complete it

That sounds obvious, but it’s what keeps tests interpretable.

Step 1: Choose one narrow change and one real goal

Good first experiments are usually a single CTA, headline, or pricing message tied to a real event.

Examples of good goals:

- `signup`

- `checkout`

- `trial_started`

- another core activation event

Avoid using page_view as the goal unless the page view itself is the outcome you care about.

A good prompt for your agent:

“I want to run an A/B test on my-site. Help me choose one page element to test and one goal event that reflects a real business outcome. Keep the scope to a single CTA or headline.”

Step 2: Make sure the goal event is already tracked

Before you create the experiment, make sure the goal event exists and is named consistently.

For simple interactions, the tracker docs now recommend declarative event tracking in markup instead of custom click handlers.

Example:

```html
<button data-aa-event="signup" data-aa-event-plan="pro">
  Sign up for Pro
</button>
```

That sends a `signup` event with `{ plan: "pro" }`.
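To make the attribute convention concrete, here is a minimal sketch that models it as a pure function: `data-aa-event` names the event, and each `data-aa-event-<key>` attribute becomes a property `<key>`. It illustrates the documented example only; `eventFromAttributes` is a hypothetical helper, not tracker.js source, and the real tracker may treat details such as value types differently.

```javascript
// Hypothetical model of declarative event tracking (not tracker.js code).
// Input: an element's attributes as a plain { name: value } object.
function eventFromAttributes(attributes) {
  const name = attributes["data-aa-event"];
  if (!name) return null; // element is not tracked

  const prefix = "data-aa-event-";
  const props = {};
  for (const [attr, value] of Object.entries(attributes)) {
    // "data-aa-event-plan" -> property "plan"; values stay strings,
    // because HTML attribute values are always strings.
    if (attr.startsWith(prefix)) {
      props[attr.slice(prefix.length)] = value;
    }
  }
  return { name, props };
}

console.log(eventFromAttributes({
  "data-aa-event": "signup",
  "data-aa-event-plan": "pro",
}));
```

In a browser you could feed it a real element with `Object.fromEntries(Array.from(el.attributes, (a) => [a.name, a.value]))`.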

If you prefer, your agent can also use window.aa?.track() when the event depends on runtime state.
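A minimal sketch of the runtime-state case follows. It assumes `window.aa.track(eventName, properties)` accepts the same event name and property object that the declarative attributes produce; that signature is an assumption here, so confirm it in the Tracker.js reference. `trackSignup` is a hypothetical helper.

```javascript
// Hypothetical helper: send the signup goal event when the plan is only
// known at runtime (for example, whatever the visitor picked in a form).
// ASSUMPTION: window.aa.track(name, props) mirrors data-aa-event / data-aa-event-*.
function trackSignup(plan, aa = globalThis.window?.aa) {
  // Optional chaining makes this a safe no-op if tracker.js has not loaded.
  aa?.track?.("signup", { plan });
}

// In the browser, e.g. from a submit handler:
// trackSignup(document.querySelector("#plan").value);
```

Taking the tracker as a parameter, with `window.aa` as the default, keeps the helper testable outside the browser.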

Step 3: Create the experiment

Tell your agent:

“Create an experiment for the signup CTA on my-site with control and new_cta variants, using signup as the goal event.”

Or do it directly with the CLI:

```bash
npx @agent-analytics/cli experiments create my-site \
  --name signup_cta --variants control,new_cta --goal signup
```

A couple of small but important details from the docs:

- Keep experiment names in `snake_case`

- Keep the scope easy to explain later: `signup_cta` is much better than something vague like `homepage_test`
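If you want your agent to check names before calling the CLI, a small regex is enough. This is a local convention check written for illustration (`isSnakeCaseName` is hypothetical), not the CLI's own validation. The name matters beyond tidiness: it is reused in the `data-aa-experiment` attribute and in the `?aa_variant_<name>=` QA parameter.

```javascript
// Lowercase words joined by single underscores, starting with a letter:
// "signup_cta" passes; "SignupCTA", "signup-cta", and "signup__cta" do not.
const SNAKE_CASE = /^[a-z][a-z0-9]*(?:_[a-z0-9]+)*$/;

function isSnakeCaseName(name) {
  return SNAKE_CASE.test(name);
}

console.log(isSnakeCaseName("signup_cta")); // true
console.log(isSnakeCaseName("signup-cta")); // false
```

A regex can't tell you that `homepage_test` is vague, though; that part still needs judgment.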

Step 4: Wire the variant declaratively in HTML

For most sites, this is the cleanest path. The existing text stays as control, and the variant lives in a data-aa-variant-* attribute.

```html
<h1 data-aa-experiment="signup_cta"
    data-aa-variant-new_cta="Start free today">
  Start your free trial
</h1>
```

How it works:

- the original element content is the control

- `data-aa-variant-{key}` provides the replacement text for a non-control variant

- multiple elements can share the same experiment name and stay in the same assigned variant
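These three rules can be modeled in a few lines. The sketch below works on plain objects standing in for DOM elements so it runs anywhere. `variantText` and `applyVariant` are hypothetical helpers, not tracker.js code; naming the control variant `control` follows the CLI example, and falling back to the control text when an element has no attribute for the assigned variant is an assumption about an edge case this post doesn't cover.

```javascript
// Each "element" is a plain object standing in for a DOM node:
// { text: <original content>, attributes: { "data-aa-...": "..." } }
function variantText(element, variant) {
  // Rule 1: the original content is the control.
  if (variant === "control") return element.text;
  // Rule 2: data-aa-variant-{key} holds the replacement text for variant {key}.
  const replacement = element.attributes[`data-aa-variant-${variant}`];
  // ASSUMPTION: keep the control text if this element has nothing for the variant.
  return replacement ?? element.text;
}

// Rule 3: every element with the same data-aa-experiment name gets the same
// assigned variant, so related copy (headline + subhead) stays consistent.
function applyVariant(elements, experimentName, variant) {
  return elements
    .filter((el) => el.attributes["data-aa-experiment"] === experimentName)
    .map((el) => variantText(el, variant));
}

const cta = {
  text: "Start your free trial",
  attributes: {
    "data-aa-experiment": "signup_cta",
    "data-aa-variant-new_cta": "Start free today",
  },
};
console.log(applyVariant([cta], "signup_cta", "new_cta")); // [ 'Start free today' ]
```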

Example with multiple elements tied to one experiment:

```html
<h1 data-aa-experiment="hero_test"
    data-aa-variant-b="Ship faster with AI">
  Build better products
</h1>

<p data-aa-experiment="hero_test"
   data-aa-variant-b="Your agent handles analytics while you code">
  Track what matters across all your projects
</p>
```

Want more than two variants? Add more `data-aa-variant-*` attributes.

```html
<h1 data-aa-experiment="cta_test"
    data-aa-variant-b="Try it free"
    data-aa-variant-c="Get started now">
  Sign up today
</h1>
```

Step 5: Add the anti-flicker snippet

If you’re using declarative experiments, add this in `<head>` before `tracker.js` so visitors don’t briefly see the control before the assigned variant is applied:

```html
<style>
  .aa-loading [data-aa-experiment] {
    visibility: hidden !important;
  }
</style>

<script>
  document.documentElement.classList.add('aa-loading');
  setTimeout(function () {
    document.documentElement.classList.remove('aa-loading');
  }, 3000);
</script>
```

A few details worth calling out:

- it uses `visibility: hidden`, not `display: none`, so layout stays stable

- it only hides experiment elements, not the whole page

- the 3-second timeout is a safety fallback if config loading fails

- `tracker.js` removes `aa-loading` after variants are applied

Step 6: QA both variants before sending real traffic

This part was missing from a lot of older experiment workflows, and it should not be skipped.

You can force variants with URL params:

- `?aa_variant_signup_cta=control`

- `?aa_variant_signup_cta=new_cta`

That makes it easy for your agent or your team to:

- check that both versions actually render

- verify the goal event still fires

- confirm there are no broken styles or copy issues
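To hand your team (or your agent's browser tool) one link per variant, you can build the forced URLs from the experiment name and variant keys. This sketch relies only on the standard `URL` API and the `aa_variant_<experiment>=<variant>` pattern shown above; `forcedVariantUrls` and the example page URL are hypothetical.

```javascript
// Build one QA link per variant. searchParams.set keeps any existing
// query parameters (like ?ref=...) intact and replaces a stale forced value.
function forcedVariantUrls(pageUrl, experimentName, variants) {
  return variants.map((variant) => {
    const url = new URL(pageUrl);
    url.searchParams.set(`aa_variant_${experimentName}`, variant);
    return { variant, url: url.toString() };
  });
}

// Example with a hypothetical page URL:
for (const { variant, url } of forcedVariantUrls(
  "https://example.com/pricing?ref=newsletter",
  "signup_cta",
  ["control", "new_cta"],
)) {
  console.log(`${variant}: ${url}`);
}
// control: https://example.com/pricing?ref=newsletter&aa_variant_signup_cta=control
// new_cta: https://example.com/pricing?ref=newsletter&aa_variant_signup_cta=new_cta
```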

A useful prompt:

“Show me how to force each variant locally so I can QA both versions, then verify that the signup event still fires correctly for each one.”

Step 7: Read results and make a decision

Once the experiment has real traffic, ask your agent:

“Check results for signup_cta and tell me whether we have enough data to pick a winner. If we do, recommend whether to keep running it, pause it, or complete it with a winner.”

Or check directly:

```bash
npx @agent-analytics/cli experiments get exp_abc123
```

The results include per-variant exposures, unique users, conversions, conversion rate, `probability_best`, lift, whether there is `sufficient_data`, and a recommendation.

Example shape:

```text
signup_cta experiment results:

control — 2.1% conversion | baseline
new_cta — 3.8% conversion | +81% lift | 96% probability best

Recommendation: ship new_cta
```

The key point: make the decision on the goal event, not on raw traffic.

A variant with more exposures is not automatically better if it does not improve the thing you actually care about.
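Here is a minimal sketch of how the per-variant numbers relate, using hypothetical counts chosen to reproduce the example's 2.1% and 3.8% rates, with control receiving twice the exposures. It assumes lift is relative to control, which matches the example (3.8 / 2.1 - 1 ≈ 0.81). `probabilityBetter` uses a normal approximation to the difference of two proportions, a standard two-variant shortcut; it is not necessarily how Agent Analytics computes `probability_best`.

```javascript
// Standard normal CDF via the Abramowitz & Stegun 7.1.26 erf approximation
// (absolute error below about 1.5e-7).
function normalCdf(z) {
  const x = Math.abs(z) / Math.SQRT2;
  const t = 1 / (1 + 0.3275911 * x);
  const poly =
    t * (0.254829592 + t * (-0.284496736 + t * (1.421413741 +
    t * (-1.453152027 + t * 1.061405429))));
  const erf = 1 - poly * Math.exp(-x * x);
  return z >= 0 ? 0.5 * (1 + erf) : 0.5 * (1 - erf);
}

// Conversion rate on the goal event, not on traffic.
const rate = (v) => v.conversions / v.exposures;

// Relative lift over control, e.g. 0.81 means +81%.
const relativeLift = (variant, control) => rate(variant) / rate(control) - 1;

// Approximate P(variant's true rate > control's), treating each observed
// rate as normally distributed. Only reasonable with enough conversions.
function probabilityBetter(variant, control) {
  const pv = rate(variant);
  const pc = rate(control);
  const se = Math.sqrt(
    (pv * (1 - pv)) / variant.exposures + (pc * (1 - pc)) / control.exposures,
  );
  return normalCdf((pv - pc) / se);
}

// Hypothetical counts: control has more exposures, new_cta converts better.
const control = { name: "control", exposures: 1000, conversions: 21 };
const newCta = { name: "new_cta", exposures: 500, conversions: 19 };

for (const v of [control, newCta]) {
  console.log(`${v.name}: ${(rate(v) * 100).toFixed(1)}% conversion over ${v.exposures} exposures`);
}
console.log(`new_cta lift vs control: +${(relativeLift(newCta, control) * 100).toFixed(0)}%`);
console.log(`P(new_cta beats control) ≈ ${(probabilityBetter(newCta, control) * 100).toFixed(0)}%`);
// control: 2.1% conversion over 1000 exposures
// new_cta: 3.8% conversion over 500 exposures
// new_cta lift vs control: +81%
// P(new_cta beats control) ≈ 96%
```

Control wins on exposures but loses on the goal event, which is the comparison that matters. With small counts the normal approximation gets shaky, which is one more reason to respect the `sufficient_data` flag instead of eyeballing rates.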

The agent loop is the real advantage

The nice part is not just that experiments exist. It’s that your agent can operate the whole loop:

- Create — define the test and goal

- Implement — wire the markup or programmatic variant logic

- QA — force both variants and verify tracking

- Monitor — check results later through API, CLI, or MCP

- Decide — recommend keep running, pause, or complete with a winner

- Repeat — suggest the next focused test

That is the actual difference between “we launched a test” and “we built an experimentation habit.”

For more complex UI changes

If you need more than text replacement — different components, layout changes, conditional rendering, image swaps — use the programmatic API:

```javascript
// If tracker.js hasn't loaded, variant is undefined and the page keeps
// its original (control) markup.
const variant = window.aa?.experiment('signup_cta', ['control', 'new_cta']);

if (variant === 'new_cta') {
  document.querySelector('.cta').textContent = 'Start Free Trial';
  document.querySelector('.hero-img').src = '/trial-hero.png';
}
```

`window.aa?.experiment()` gives deterministic client-side assignment, so the same user keeps seeing the same variant.

Use this when declarative HTML isn’t enough. For simple copy tests, declarative attributes are still the best default.
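This post says `window.aa?.experiment()` assigns deterministically on the client but doesn't say how. One common technique is to hash a stable visitor ID together with the experiment name and map the hash to a variant. The sketch below does that with FNV-1a plus the MurmurHash3 `fmix32` finalizer; the hash choice, the `assignVariant` name, and the existence of a stable visitor ID are all assumptions, not a description of tracker.js.

```javascript
// FNV-1a (32-bit) over the string's UTF-16 code units.
function fnv1a32(str) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < str.length; i++) {
    hash ^= str.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0;
}

// MurmurHash3 fmix32 finalizer: spreads small input differences
// (like visitor-1 vs visitor-2) across all 32 bits.
function fmix32(h) {
  h ^= h >>> 16;
  h = Math.imul(h, 0x85ebca6b);
  h ^= h >>> 13;
  h = Math.imul(h, 0xc2b2ae35);
  h ^= h >>> 16;
  return h >>> 0;
}

// Same visitor + same experiment always maps to the same variant. Mixing in
// the experiment name decorrelates assignments across different experiments.
function assignVariant(visitorId, experimentName, variants) {
  const hash = fmix32(fnv1a32(`${experimentName}:${visitorId}`));
  return variants[hash % variants.length];
}

console.log(assignVariant("visitor-42", "signup_cta", ["control", "new_cta"]));
```

Hashing instead of storing a random draw means no lookup is needed to stay consistent, as long as the visitor ID itself is stable.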

Common mistakes to avoid

Based on the current docs, these are the big ones:

- testing too many elements at once

- using `page_view` as the goal instead of a conversion event

- creating the experiment before the goal event is actually tracked

- forgetting to QA forced variants before sending traffic through the test

- bundling copy, layout, and offer changes into one experiment

- leaving an experiment active after you already know the winner

Why this fits agents better than dashboard-first tools

Traditional A/B platforms assume a human will keep opening the dashboard.

Agent Analytics assumes your agent can:

- create the experiment

- update the code

- verify both branches

- query results later

- tell you what to do next

That makes experiments much easier to keep running in practice.

Get started

- Start here — AI Agent Experiment Tracking

- Tracker reference — Tracker.js

- Access modes — CLI vs MCP vs API

- Full API reference — Experiments API

- Create an account — app.agentanalytics.sh

- Install the skill — ask your agent to install from ClawHub

- Self-host — it’s open source on GitHub

Previously: Set Up Agent Analytics with OpenClaw (5 Minutes)