🦞 The Bullseye Method for Technical Indie Hackers

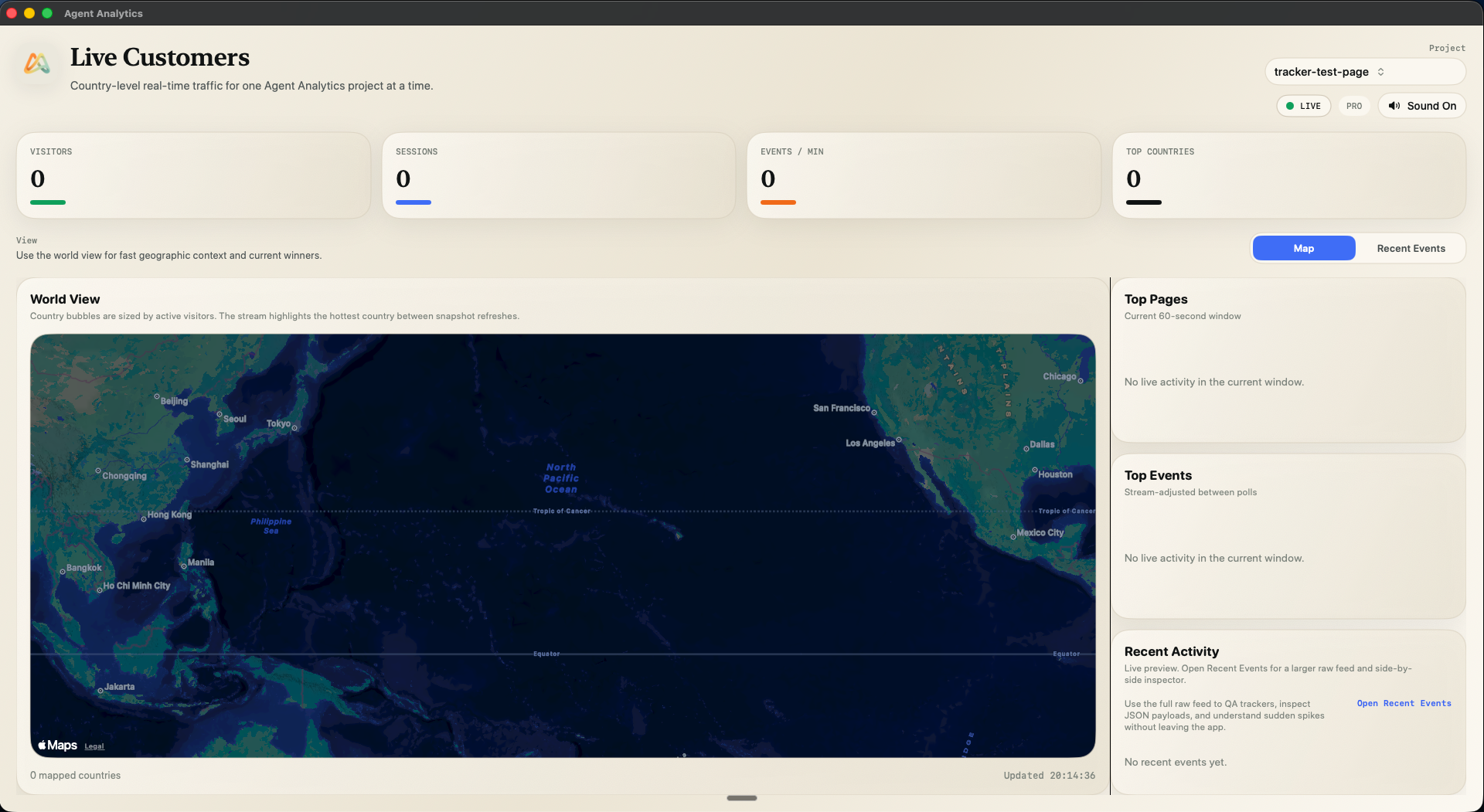

Use OpenClaw to brainstorm and test growth channels, then use Agent Analytics to measure what actually drives activated users.

Most technical founders don’t have a shipping problem. They have a channel selection problem.

You can build features in hours now. Distribution is the bottleneck.

That’s why Traction, the book by Gabriel Weinberg and Justin Mares, still matters. Its Bullseye method is simple:

- test multiple plausible channels,

- measure outcomes consistently,

- focus on the one channel that proves itself now.

In 2026, this maps perfectly to our stack:

- OpenClaw does research + execution,

- Agent Analytics gives measurement + feedback,

- you run a weekly KEEP / KILL loop from evidence (not vibes).

The classic idea (without the fluff)

Bullseye is not “pick your favorite growth channel.” It’s:

- Brainstorm broadly

- Shortlist realistically

- Test quickly

- Focus aggressively

Different summaries describe different step counts, but the behavior is the same:

Broad exploration → narrow focus.

Why this fits agent-native builders

If you already use OpenClaw/Claude Code/Cursor workflows, your bottleneck is no longer execution speed.

Your agent can now:

- map competitor channel presence,

- generate channel hypotheses,

- prep assets and UTM links,

- run tests,

- report which channel produced activated users.

That turns Bullseye from a theory into a repeatable operating system.

Step 1) Agent-assisted channel brainstorming (evidence first)

Use this prompt pattern:

Analyze our product, audience (technical indie hackers + micro-SaaS founders), and top competitors. Propose 10 acquisition channels ranked by speed-to-signal in the next 14 days. For each include: why now, 7-day test, effort, success metric, kill condition.

Force output into this table:

| Channel | Why now | 7-day test | Effort | Success metric | Kill condition |
|---|---|---|---|---|---|

No matrix = no test.

Step 2) Competitor channel reconnaissance with OpenClaw

Before choosing channels, pull public evidence.

Check:

- Blog cadence + topics

- Docs / integration footprint

- Community presence (Reddit/HN/Discord)

- Comparison / SEO pages

- Launch and update patterns

Example evidence table (directional only):

| Competitor | Evidence URL | Observed channel | Signal |
|---|---|---|---|
| Plausible | https://plausible.io/blog | Content/blog channel | Active blog surface |
| Plausible | https://plausible.io/docs | Docs-led channel | Public docs hub |
| PostHog | https://posthog.com/blog | Content/blog channel | Active blog surface |
| PostHog | https://posthog.com/docs | Docs-led channel | Public docs hub |
| Umami | https://umami.is/blog | Content/blog channel | Active blog surface |
| Umami | https://umami.is/docs | Docs-led channel | Public docs hub |

Important: this shows channel presence, not channel performance. Use it to prioritize what to test, not blindly copy.

Step 3) Build a practical shortlist for Agent Analytics

Start with channels that fit technical buyers:

- Developer communities (Reddit/HN/niche Discords)

- Tactical blog guides (implementation-first)

- SEO/comparison pages (high intent)

- Integrations/partner ecosystems

- Email to existing audience

- Small paid tests (strict kill rules)

Quick rule-of-thumb:

- Need fast signal → communities + micro paid tests

- Need compounding growth → SEO + blog + integrations

- Need trust transfer → deep guides + docs + demos

Step 4) Measure channel quality with Agent Analytics

This is the key positioning:

We are not “the tactic.” We are the measurement layer that makes tactics agent-operable.

Use one shared funnel across all channel tests:

page_view -> cta_click -> signup_completed -> project_created

Attach attribution to every campaign link:

- utm_source

- utm_medium

- utm_campaign
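Tagging links by hand is where attribution usually breaks, so it helps to generate them. Here is a minimal sketch using only Python's standard library. The `add_utm` helper and the example values are assumptions for illustration, not an Agent Analytics API.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return url with the three UTM parameters set, keeping existing query params."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    # Overwrite any stale UTM values so each test gets a clean tag.
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


link = add_utm("https://example.com/pricing?ref=nav", "reddit", "community", "bullseye_w1")
```

Using one naming scheme for `utm_campaign` across all tests in a given week (the `bullseye_w1` value here is made up) makes the later per-channel comparison a simple group-by.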

Judge channel winners by activated-user quality, not raw clicks.
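To make "activated-user quality" concrete, the shared funnel can be counted per `utm_source` and ranked by how many users reached `project_created`. The sketch below assumes events have been exported as plain dictionaries with `user_id`, `event`, and `utm_source` keys. That record shape is hypothetical, not the Agent Analytics schema. It also counts distinct users per step rather than enforcing step order, which is a simplification.

```python
from collections import defaultdict

FUNNEL = ["page_view", "cta_click", "signup_completed", "project_created"]


def funnel_by_source(events):
    """Count distinct users reaching each funnel step, grouped by utm_source."""
    users = defaultdict(lambda: {step: set() for step in FUNNEL})
    for e in events:
        if e["event"] in FUNNEL:
            users[e["utm_source"]][e["event"]].add(e["user_id"])
    return {
        source: {step: len(ids) for step, ids in steps.items()}
        for source, steps in users.items()
    }


def rank_by_activation(report):
    """Sort sources by activated users, then by activation rate (not by clicks)."""
    def key(item):
        _, counts = item
        views = counts["page_view"]
        rate = counts["project_created"] / views if views else 0.0
        return (counts["project_created"], rate)
    return sorted(report.items(), key=key, reverse=True)
```

With this ranking, a channel that sends ten visitors and no created projects sorts below one that sends three visitors and one created project.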

Need help getting OpenClaw connected first? Start here: 🦞 Set Up Agent Analytics with OpenClaw (5 Minutes)

Step 5) Weekly Bullseye loop (KEEP / KILL)

Monday

- Agent delivers 10-channel matrix + competitor evidence

- You choose top 3 channels

Tuesday

- Prepare links/assets, verify tracking

Wednesday–Friday

- Run 3 micro-tests

Saturday

- Agent delivers comparison report (funnel + activation + early retention)

Sunday

- KEEP 1 winner

- KILL/PARK 2 losers

- Queue 2 new tests

This is how Bullseye compounds.
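The Sunday decision can be written down as a rule so it does not drift from week to week. This sketch is one possible encoding, assuming each test reports a count of activated users. The `keep_kill` function, the `min_activated` threshold, and the choice to PARK a loser that cleared the threshold and KILL one that did not are illustrative assumptions. In practice, use the kill conditions from your own matrix.

```python
def keep_kill(activated: dict[str, int], min_activated: int = 1) -> dict[str, str]:
    """Return {channel: 'KEEP' | 'PARK' | 'KILL'} for one week's tests."""
    # Highest activated-user count first; ties keep their input order.
    ranked = sorted(activated.items(), key=lambda kv: kv[1], reverse=True)
    decisions = {}
    for position, (channel, count) in enumerate(ranked):
        if count < min_activated:
            decisions[channel] = "KILL"   # failed its kill condition
        elif position == 0:
            decisions[channel] = "KEEP"   # single winner for the week
        else:
            decisions[channel] = "PARK"   # showed signal, but not the winner
    return decisions


week = keep_kill({"reddit": 4, "seo": 1, "ads": 0})
```

If no channel clears the threshold, every test is marked KILL and the week produces no winner, which is still a useful result for the next round of hypotheses.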

Weekly command checklist (copy/paste workflow)

- “OpenClaw, generate top 10 channel hypotheses with kill conditions.”

- “OpenClaw, map competitor channel evidence with URLs.”

- “OpenClaw, create 3 test plans for this week.”

- “OpenClaw, produce UTM link set for each test.”

- “OpenClaw, summarize channel performance by activation funnel.”

- “OpenClaw, recommend KEEP/KILL with confidence notes.”

Common mistakes

- Picking winners by clicks only

- Testing too many channels at once

- Running tests without kill conditions

- Using inconsistent funnel definitions per channel

- No weekly cadence

Final framing

- Bullseye = the classic strategy

- OpenClaw = execution engine

- Agent Analytics = measurement + decision loop

That combination is the point: your agent can run growth work, not just write code.

If you like this approach, next read: Talk to Your Analytics

Source appendix

Bullseye / Traction

- https://traction.usefedora.com/p/traction-a-startup-guide-to-getting-customers

- https://books.google.com/books/about/Traction.html?id=A3_MBgAAQBAJ

- https://www.penguin.co.uk/books/289806/traction-by-mares-gabriel-weinberg-and-justin/9780241242537

Attribution standard

Agent Analytics references

- https://agentanalytics.sh/

- https://blog.agentanalytics.sh/blog/setup-agent-analytics-with-openclaw/

- https://blog.agentanalytics.sh/blog/talk-to-your-analytics/

Competitor recon examples

- https://plausible.io/blog

- https://plausible.io/docs

- https://posthog.com/blog

- https://posthog.com/docs

- https://umami.is/blog

- https://umami.is/docs