🦞 Analytics Closes the Agent Feedback Loop

AI systems improve when action and consequence stay connected. Analytics is the measurement layer that tells your agent what happened after it shipped.

Your agent rewrites a headline, deploys the change, and messages you:

“Shipped.”

Cool.

Did signups go up? Did more people click the CTA? Did retention improve, or did the new copy attract the wrong users?

If your agent can’t answer those questions, it didn’t complete a feedback loop. It completed a task.

That’s the difference.

AI Agents Need Tight Feedback Loops

AI systems become useful when the gap between action and consequence is short.

A useful agent loop has three basic steps:

- Take an action — edit a page, change onboarding, launch a campaign

- Observe what happened — traffic, clicks, signups, retention, experiment results

- Update the next action — keep, kill, test, iterate

That is how humans improve. It’s how growth teams improve. It’s how agents improve too.

Without observing what happened, the system is flying blind.

Your agent can write code all day. It can deploy 20 changes before lunch. But if it has no reliable way to measure outcomes, it can’t really learn. It can only keep shipping and hope.
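Here is a minimal sketch of that three-step loop in Python. Everything in it is an assumption made for illustration: the `Observation` shape, the `decide` and `run_loop` names, and the 5% lift threshold are hypothetical, not part of any particular analytics product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Observation:
    """Outcome of one change, measured after it shipped (hypothetical shape)."""
    baseline_rate: float  # conversion rate before the change
    current_rate: float   # conversion rate after the change


def decide(obs: Observation, min_lift: float = 0.05) -> str:
    """Map an observation to the next action: keep, kill, or test."""
    if obs.baseline_rate <= 0:
        return "test"  # no usable baseline, so gather more data first
    lift = (obs.current_rate - obs.baseline_rate) / obs.baseline_rate
    if lift >= min_lift:
        return "keep"
    if lift <= -min_lift:
        return "kill"
    return "test"  # the change is inside the noise band


def run_loop(act: Callable[[], None], observe: Callable[[], Observation]) -> str:
    """One pass of the loop: take an action, observe, pick the next action."""
    act()
    return decide(observe())


# Illustrative numbers only: a headline change that lifts conversion 4.0% -> 4.6%.
shipped = []
next_action = run_loop(
    lambda: shipped.append("new headline"),
    lambda: Observation(baseline_rate=0.040, current_rate=0.046),
)
print(next_action)
```

The point of the sketch is the shape: `observe` is a required argument. An agent that cannot supply it has nothing to pass to `decide`.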

Where Most Agent Workflows Break

This is the part a lot of agent demos skip.

The agent writes code. Great. The agent opens a PR. Great. The agent deploys the change. Great.

Then the loop goes dark.

What happened after the deploy?

Most analytics tools assume a human will open a dashboard, click around, build a report, compare time ranges, and decide what to do next. That breaks the loop for agents.

Agents don’t want dashboards. They need structured outcome signals they can query programmatically.

Not screenshots. Not “someone should check Mixpanel later.” Not a browser session that expires every day.

They need an API, a CLI, or MCP tools that let them answer the question that matters:

“Did the thing I just changed make the product better?”
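To make "structured outcome signal" concrete, here is a small sketch of what an agent might do with one. The JSON shape (`metric`, `before`, `after`) and the names `OutcomeSignal` and `parse_signal` are invented for this example; a real API, CLI, or MCP tool will have its own response format.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class OutcomeSignal:
    """A before/after measurement for one metric (hypothetical shape)."""
    metric: str
    before: float
    after: float

    @property
    def relative_change(self) -> float:
        """Fractional change versus the earlier period (0.10 means +10%)."""
        if self.before == 0:
            raise ValueError("no baseline to compare against")
        return (self.after - self.before) / self.before


def parse_signal(payload: str) -> OutcomeSignal:
    """Parse a JSON payload in the assumed shape used by this sketch."""
    data = json.loads(payload)
    return OutcomeSignal(
        metric=str(data["metric"]),
        before=float(data["before"]),
        after=float(data["after"]),
    )


# Example payload, made up for illustration.
raw = '{"metric": "signups", "before": 200, "after": 230}'
signal = parse_signal(raw)
print(signal.metric, round(signal.relative_change, 3))
```

A screenshot of a chart carries the same information, but the agent would have to guess at it. A typed value like this can go straight into the next decision.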

Analytics Is the Outcome Signal

This is why analytics matters so much in the agent era.

Analytics is not just reporting. It’s the part of the loop that tells the system what happened in the real world after it acted.

For an agent, that means:

- Stats tell it whether a change moved anything at all

- Funnels tell it where users drop off

- Retention tells it whether the improvement actually sticks

- Experiments tell it which variant wins

- Breakdowns tell it which audience, page, source, or device is driving the change

That is the measurement layer.
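As one example of how these signals reduce to simple arithmetic, here is a sketch of the funnel calculation. The function names and the event counts are hypothetical; the input is just an ordered list of steps with the number of users who reached each one.

```python
from typing import Dict, List, Tuple


def funnel_report(steps: List[Tuple[str, int]]) -> List[Dict]:
    """Step-to-step conversion for an ordered list of (event, users) pairs."""
    report = []
    for (prev_name, prev_users), (name, users) in zip(steps, steps[1:]):
        conversion = users / prev_users if prev_users else 0.0
        report.append({
            "from": prev_name,
            "to": name,
            "conversion": conversion,
            "lost": prev_users - users,
        })
    return report


def biggest_drop_off(steps: List[Tuple[str, int]]) -> Dict:
    """Return the transition with the lowest conversion rate."""
    report = funnel_report(steps)
    if not report:
        raise ValueError("a funnel needs at least two steps")
    return min(report, key=lambda row: row["conversion"])


# Illustrative counts, not real data.
steps = [("page_view", 1000), ("cta_click", 200), ("signup", 50)]
worst = biggest_drop_off(steps)
print(worst["from"], "->", worst["to"], worst["conversion"])
```

With these made-up numbers the weakest transition is `page_view -> cta_click`, so that is where the agent would look first.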

One important distinction: this is not about monitoring the agent itself. We are not talking about token usage, internal traces, or agent observability.

We’re talking about product and user outcomes.

The agent changed something. Analytics tells it what happened next.

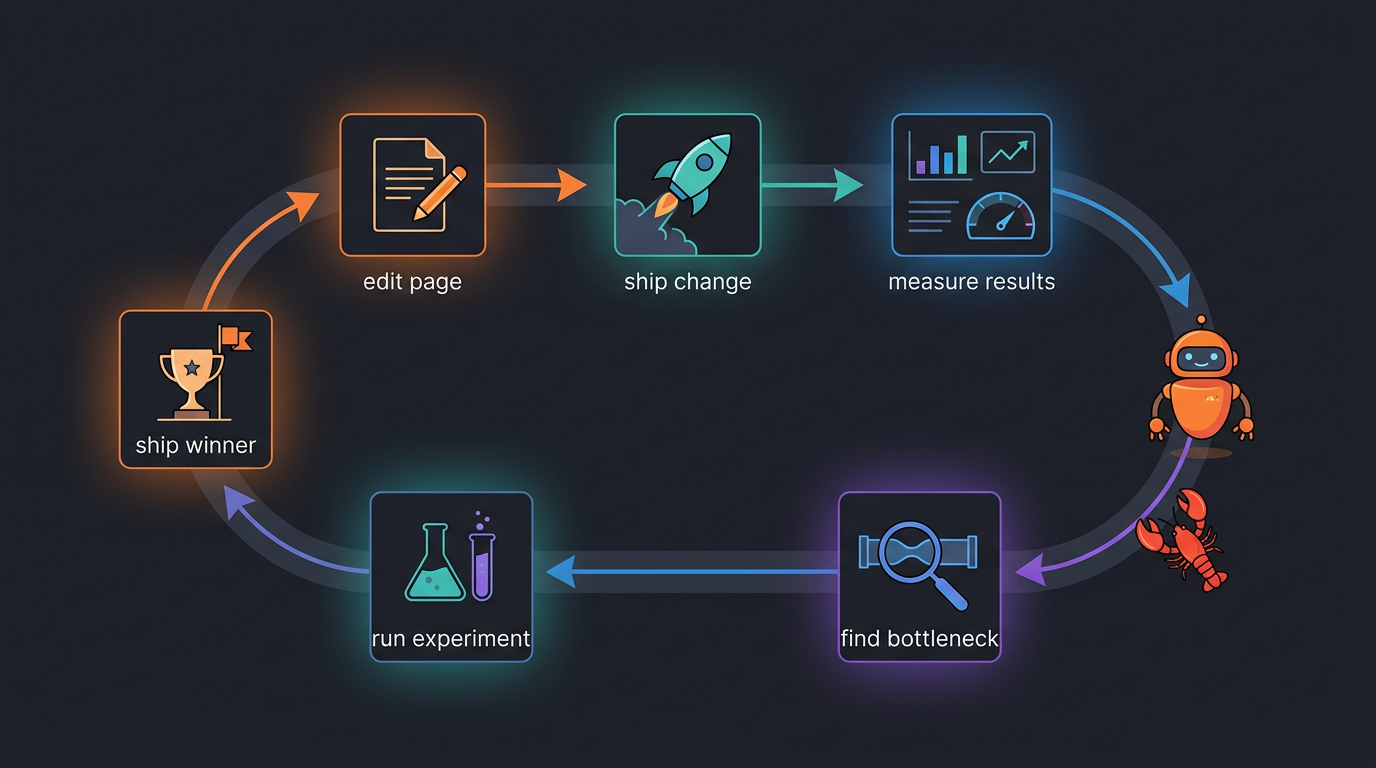

The Loop, Closed

Here is what a real agent feedback loop looks like in practice:

- Agent edits a landing page

- Agent ships the change

- Agent measures results — traffic, CTA clicks, signup rate

- Agent finds the bottleneck — maybe the CTA improved but signup completion got worse

- Agent creates an experiment to test the next fix

- Agent measures again and ships the winner

Then it repeats.

This is the important part: the loop stays closed because measurement is built into the workflow. No human has to remember to log in and inspect a dashboard before the next decision gets made.
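The "ships the winner" step needs a rule for deciding when a variant has actually won. One common choice is a two-proportion z-test, sketched below. This is an assumption for illustration, not a description of how any specific analytics tool scores experiments; the function names and the 1.96 threshold (roughly a two-sided 5% significance level) are choices made for this example.

```python
import math


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """z-statistic for the difference in conversion rate between B and A."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both variants need at least one visitor")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0  # no variation at all, so no evidence either way
    return (p_b - p_a) / std_err


def pick_winner(conv_a: int, n_a: int, conv_b: int, n_b: int,
                z_threshold: float = 1.96) -> str:
    """Return "A", "B", or "inconclusive" based on the z-statistic."""
    z = two_proportion_z(conv_a, n_a, conv_b, n_b)
    if z >= z_threshold:
        return "B"
    if z <= -z_threshold:
        return "A"
    return "inconclusive"


# Illustrative counts: 100/2000 conversions for A versus 150/2000 for B.
print(pick_winner(100, 2000, 150, 2000))
```

Returning "inconclusive" matters as much as returning a winner. It tells the agent to keep the experiment running instead of shipping on noise.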

A Simple Example

Let’s say your agent changes the hero copy on your homepage.

A weak workflow looks like this:

- ship new copy

- wait a few days

- maybe remember to check analytics later

- guess whether the change worked

A tight loop looks like this:

- ship new copy

- check page performance 24 hours later

- compare CTA click rate vs previous period

- check the funnel from page_view -> cta_click -> signup

- see whether the new copy improved top-of-funnel clicks but hurt signup completion

- test a more specific CTA next

That second workflow compounds.

The first one produces activity. The second one produces learning.
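The tight loop above can be sketched as a period-over-period comparison. The `PeriodCounts` type, the `diagnose` function, and all of the counts are hypothetical. The comparison is also deliberately naive: it ignores sample size and noise, which a real check should account for.

```python
from dataclasses import dataclass


@dataclass
class PeriodCounts:
    """Unique users reaching each funnel step in one time window."""
    page_views: int
    cta_clicks: int
    signups: int

    @property
    def click_rate(self) -> float:
        return self.cta_clicks / self.page_views if self.page_views else 0.0

    @property
    def signup_completion(self) -> float:
        return self.signups / self.cta_clicks if self.cta_clicks else 0.0


def diagnose(previous: PeriodCounts, current: PeriodCounts) -> str:
    """Compare two periods and suggest the next step."""
    clicks_up = current.click_rate > previous.click_rate
    completion_down = current.signup_completion < previous.signup_completion
    if clicks_up and completion_down:
        return "clicks improved but signup completion dropped: test a more specific CTA"
    if clicks_up:
        return "clicks improved and completion held: keep the new copy"
    return "clicks did not improve: revert or try different copy"


# Illustrative counts for the day before and the day after the copy change.
before = PeriodCounts(page_views=1000, cta_clicks=100, signups=30)
after = PeriodCounts(page_views=1000, cta_clicks=150, signups=36)
print(diagnose(before, after))
```

In this made-up case signups rose from 30 to 36, so a glance at the top-line number would call the change a win. The funnel view shows that completion fell from 30% to 24%, which is what points to the next test.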

Why Dashboards Break the Loop

Dashboards are fine for humans. But they’re a bad dependency for autonomous systems.

A dashboard is built around the assumption that someone will:

- remember to open it

- know what question to ask

- click through the right filters

- interpret the result manually

- pass the answer back into the next decision

That’s a lot of friction.

A tight feedback loop needs the opposite:

- low-latency access to results

- structured outputs the agent can reason about

- repeatable queries the agent can run on its own

- clear success/failure signals tied to real goals

That is why agent-native analytics matters. It keeps the measure step inside the loop.
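A sketch of what "clear success/failure signals tied to real goals" could look like: goals are declared once, and each check returns a machine-readable verdict. The `Goal` type, the `evaluate_goals` function, and the metric names are all assumptions made for this example.

```python
import json
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Goal:
    metric: str
    target: float  # minimum acceptable value for the metric


def evaluate_goals(goals: List[Goal], metrics: Dict[str, float]) -> str:
    """Return a JSON list with a pass/fail/missing status for each goal."""
    results = []
    for goal in goals:
        value = metrics.get(goal.metric)
        if value is None:
            status = "missing"  # the metric was not measured at all
        elif value >= goal.target:
            status = "pass"
        else:
            status = "fail"
        results.append({
            "metric": goal.metric,
            "target": goal.target,
            "value": value,
            "status": status,
        })
    return json.dumps(results)


# Illustrative goals and measurements.
goals = [Goal("signup_rate", 0.05), Goal("week1_retention", 0.30), Goal("activation", 0.40)]
measured = {"signup_rate": 0.06, "week1_retention": 0.25}
verdicts = evaluate_goals(goals, measured)
print(verdicts)
```

Because the output is plain JSON, the same query can run on a schedule and feed the next decision without anyone opening a dashboard. The "missing" status is worth keeping separate from "fail": it means the loop is open at that point, not that the change lost.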

What This Unlocks

Once your agent can measure outcomes directly, it stops being just a coding tool.

It becomes a growth operator.

Now it can:

- check every project each morning and tell you what changed

- monitor funnels and find the biggest drop-off

- compare channels by signups, activation, or retention

- run experiments and ship winners

- tell you not just what happened, but what to do next

That is the real promise here.

Not “AI can write code faster.”

The bigger shift is:

your agent can now act, measure, and adapt inside the same system.

Get Started

If you want to set this up today:

- Sign up at app.agentanalytics.sh

- Start here: 🦞 Set Up Agent Analytics with OpenClaw (5 Minutes)

- Read next: Talk to Your Analytics

Because shipping faster is only half the story.

The systems that win are the ones that can learn faster too.

Previously: Grow Your Projects from Claude Desktop · Funnels: See Where Users Drop Off · A/B Testing Your AI Agent Can Actually Use