Teach Your AI Agent AIDA for Landing Page Analytics

AIDA is old. The new workflow is asking your AI agent to map Attention, Interest, Desire, and Action to live landing-page analytics before it writes variants.

Guide

Guides, comparisons, and product notes on open source website analytics, analytics APIs, self-hosted setups, and Plausible or Mixpanel alternatives.

This blog covers analytics for AI agents: API-first measurement, open source website analytics, analytics APIs, and self-hosted analytics workflows for builders who want their agent to query outcomes instead of reading dashboards.

If you are looking for a Plausible alternative or Mixpanel alternative, start with the posts below, then compare the product shape against the API docs and the Mixpanel comparison.

Segmentation, targeting, and positioning matter more when your AI agent can create endless pages. Use STP to force one segment, one target, one promise, and one measurable activation loop.

Guide

YC's AI-native company advice points to one practical operating model: measurable surfaces, project context, portfolio context, and analytics your existing AI agents can use when deciding what to do next.

Essay

Hermes just released Subagent Delegation. Agent Analytics used that exact model in its skill for growth audits, and our dogfood run produced 140% more growth bets, 140% more evidence references, and 125% higher output density.

Announcement

Hermes can handle your projects, ship changes, and keep context. Agent-readable analytics closes the loop so Hermes can see what users actually did after the work shipped.

Guide

When an AI agent uses your product, the upgrade moment should carry the task context, the paid capability, and a safe human approval handoff.

Announcement

A practical model for agent-readable analytics: portfolios connect related projects, projects keep local product truth, and surfaces are where users encounter each project.

Announcement

Agent Analytics turns your public site into a prioritized measurement plan and shows your coding agent the data you are not collecting yet.

Announcement

LLM judges are useful for generating pressure, but they drift when they judge themselves. The better loop ships variants, waits for real behavior, and starts the next round from evidence.

Guide

If Claude Code, Codex, OpenClaw, Paperclip, or another AI agent uses your product, feedback should not be a UI form. Give the agent a documented endpoint.

Guide

A safe login pattern for AI agents: the human owns identity, the agent owns the work, and no one pastes API keys into chat.

Guide

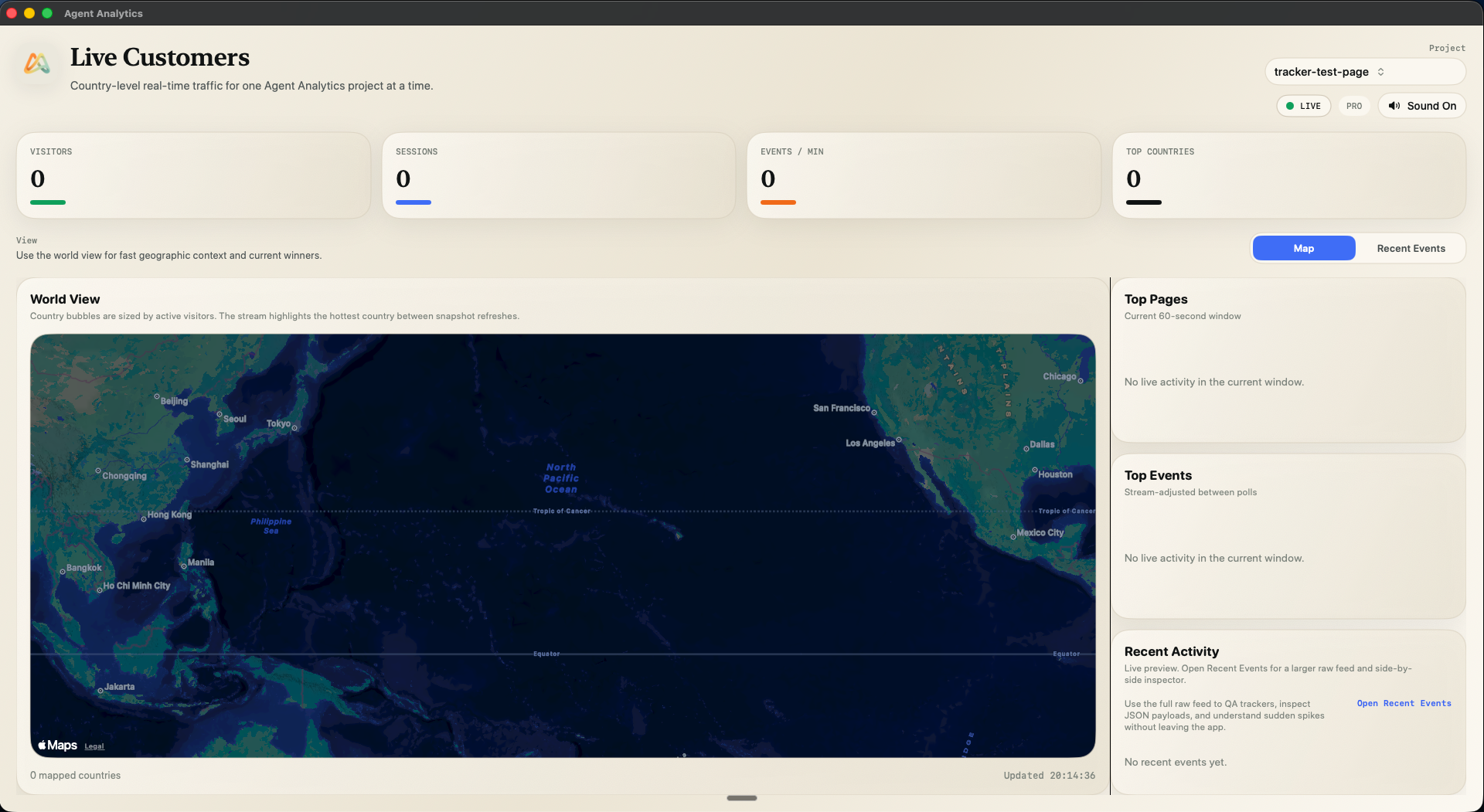

Session paths make entry pages, exit pages, and the steps between them agent-readable, so your agent knows what to investigate next.

Guide

🗄️ Cabinet gives you the knowledge base and AI team. Agent-readable analytics gives your Data Analyst real user outcomes to measure and improve.

Guide

A practical comparison of Plausible, Umami, PostHog, Mixpanel, Google Analytics, and Agent Analytics for AI-built side projects and agent-led workflows.

Guide

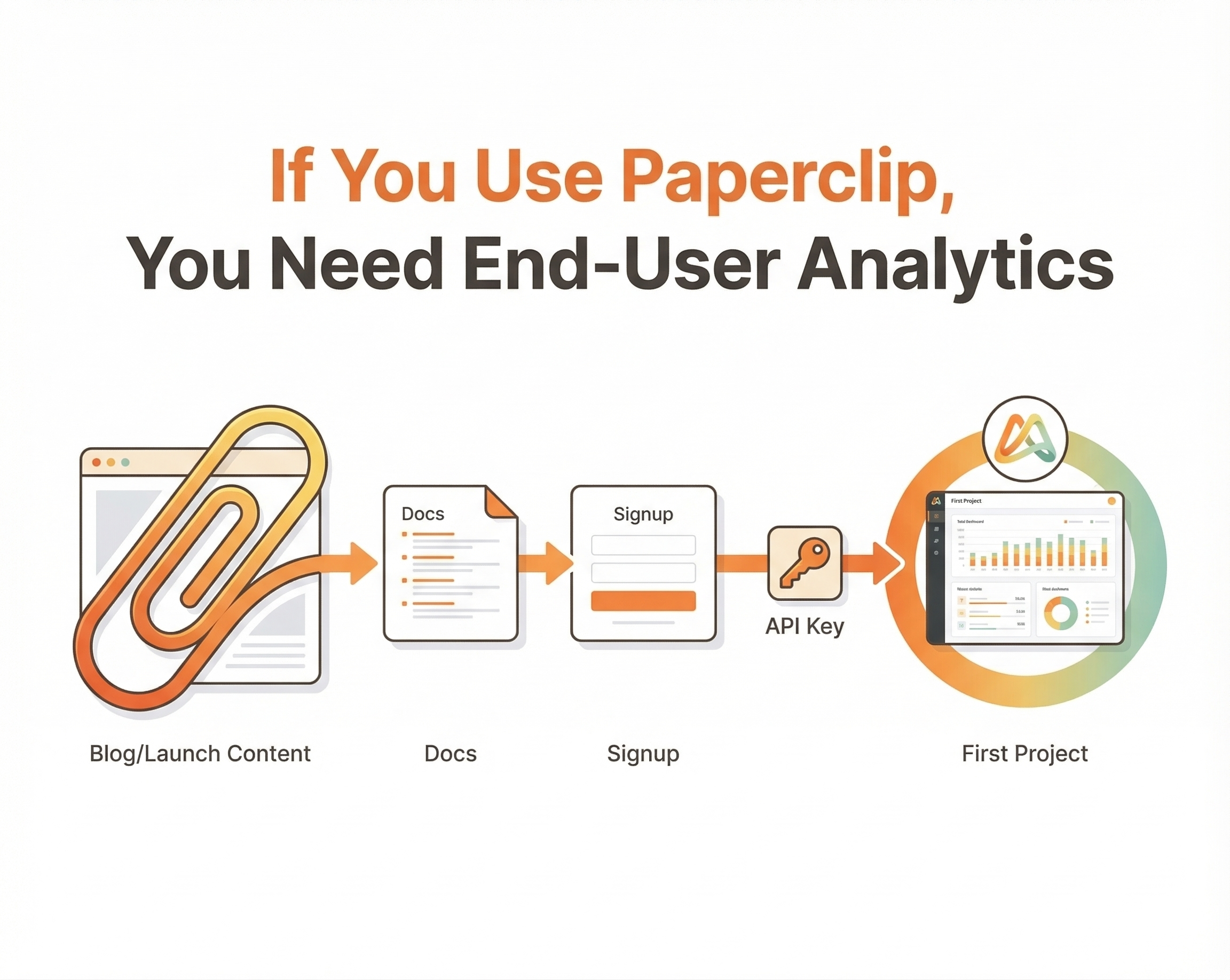

📎 Paperclip orchestrates zero-human companies. Agent-readable analytics shows whether users reached install, signup, API keys, and first project.

Guide

AI coding tools made it easy to launch more side projects than dashboard workflows can track. Agent Analytics gives your AI agent one measurement layer.

Guide

A faster way to watch live traffic, QA trackers, and keep real-time context available for you and the agents helping you ship.

Story

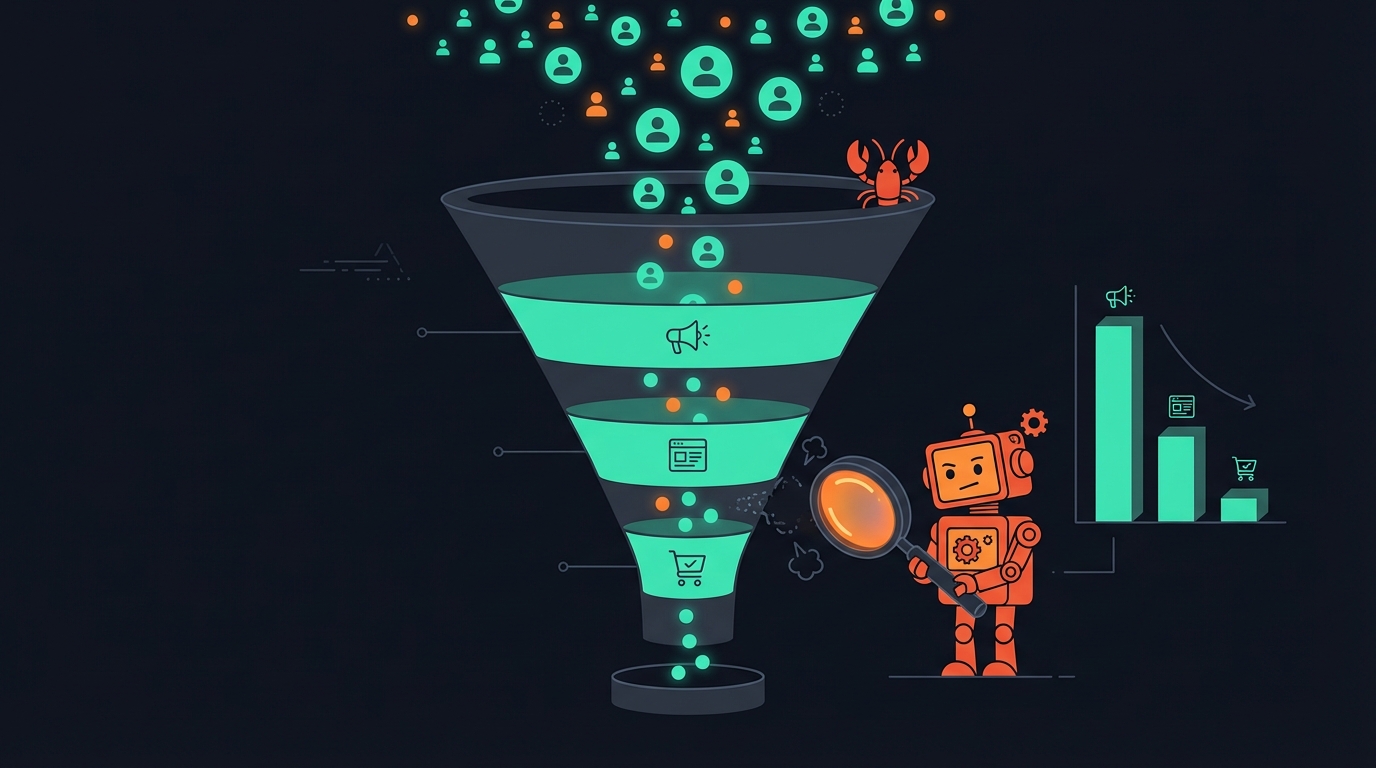

Use Agent Analytics with OpenClaw, Claude Code, Cursor, Codex, or any coding agent to diagnose which part of your growth loop is broken.

Guide

GPT-5.4 improved the capabilities that make AI-agent growth analysis work: long multi-step tasks, tool use, and polished business outputs.

Guide

AI systems improve when action and consequence stay connected. Analytics is the measurement layer that tells your agent what happened after it shipped.

Guide

Use OpenClaw as an AI growth agent to explore channels, then use Agent Analytics to measure what actually drives activated users.

Guide

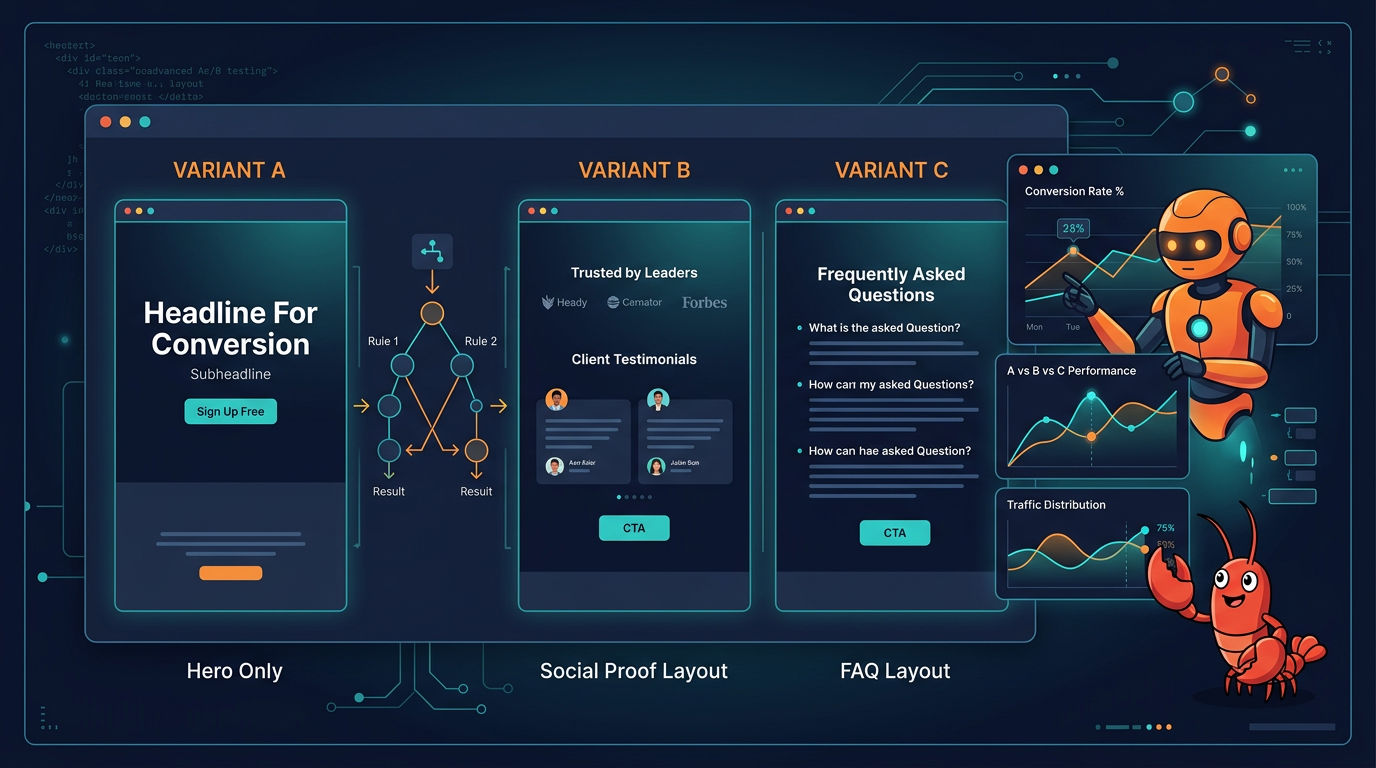

Go beyond headline swaps. Test full sections, conditional experiences, and multi-step flows your AI agent can measure and improve.

Guide

Use Claude Desktop as a growth agent to query analytics, spot bottlenecks, run experiments, and iterate without leaving your chat.

Guide

Declarative events, opt-in browser signals, privacy guardrails, and a public browser smoke suite make the Agent Analytics tracker safer for Claude Code, Codex, Cursor, and your AI agent.

Announcement

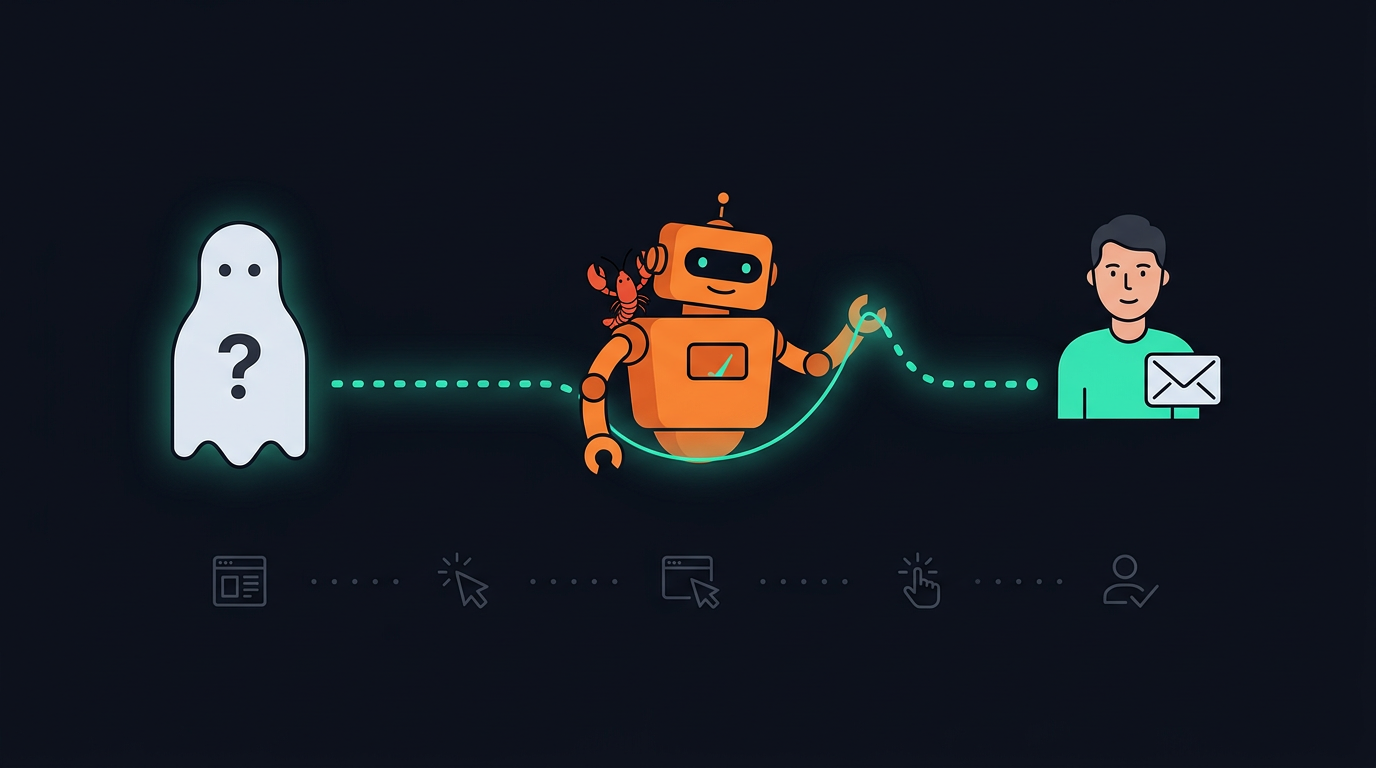

Identity stitching connects anonymous and signed-in behavior so your analytics, and the agents querying it, see one user journey.

Guide

Step-by-step conversion analysis your AI agent can query. Find the bottleneck, fix it, measure again.

Guide

Run browser-side experiments with declarative HTML variants, no heavy SDK, and an API your agent can drive from setup to winner.

Guide

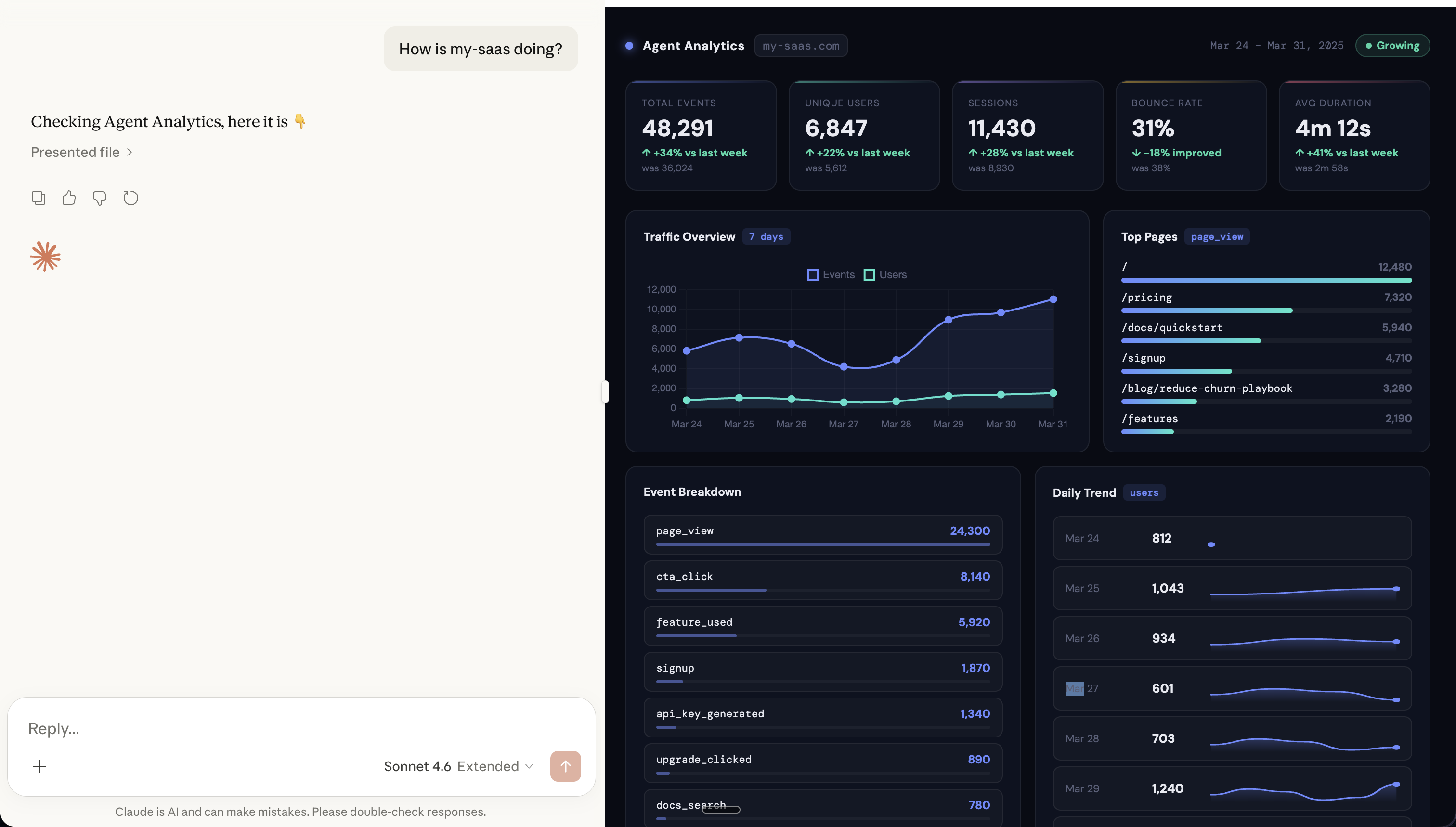

Your AI agent can answer analytics questions from quick counts to multi-step analysis across experiments, funnels, and retention. No dashboards.

Announcement

Add analytics to all your projects and let your AI agent track what's working. No dashboards. Just ask.

Guide

Updates, guides, and thoughts on analytics for products built, measured, and improved with AI agents.

Announcement

I was paying $28/mo to track pageviews across 5 side projects. I didn't want dashboards. I wanted my AI agent to handle it.

Story